Text classification with a convolutional network in keras

Oddly enough, this post is about a text classifier built on a convolutional network (vectorizing individual words is a separate question). The code, test data and usage examples [1] are on bitbucket (I ran into github's file size limits and its suggestion to use Git Large File Storage (LFS), and I haven't gotten to grips with that solution yet).

Datasets

I used converted versions of these datasets: http://www.daviddlewis.com/resources/testcollections/reuters21578/ [2] (22000 records), https://github.com/watson-developer-cloud/car-dashboard/blob/master/training/car_workspace.json [3] (530 records), https://github.com/watson-developer-cloud/natural-language-classifier-nodejs/blob/master/training/weather_data_train.csv [4] (50 records). By the way, I would not say no to a Russian-language text dataset dropped in the comments or a PM (preferably the comments).

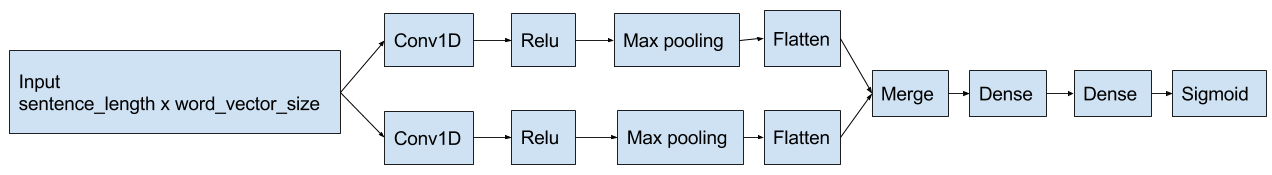

Network architecture

The network is based on one implementation of the network described here: https://arxiv.org/abs/1408.5882 [5]. The code of the implementation I used is at https://github.com/alexander-rakhlin/CNN-for-Sentence-Classification-in-Keras [6].
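To make the idea behind the paper's architecture more concrete, here is a minimal pure-Python sketch of one convolutional filter sliding over a sentence matrix, followed by max-over-time pooling. The toy vectors, the filter weights and the function names are made up for illustration, and the ReLU nonlinearity is a choice made for this sketch; the real network uses many learned filters and is built with keras.

```python
from typing import List

Vector = List[float]


def conv1d_over_words(sentence: List[Vector], kernel: List[Vector], bias: float = 0.0) -> List[float]:
    """Slide one filter that spans len(kernel) consecutive words over the sentence.

    Each output value is ReLU(sum of element-wise products + bias).
    """
    window = len(kernel)
    outputs = []
    for start in range(len(sentence) - window + 1):
        total = bias
        for word_vec, kernel_row in zip(sentence[start:start + window], kernel):
            total += sum(x * w for x, w in zip(word_vec, kernel_row))
        outputs.append(max(0.0, total))  # ReLU
    return outputs


def max_over_time(feature_map: List[float]) -> float:
    """Keep only the strongest response of the filter, wherever it occurred."""
    return max(feature_map)


# Toy input: 4 words with made-up 2-dimensional "word vectors".
sentence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
# One made-up filter that looks at 2 consecutive words.
kernel = [[1.0, 0.0], [0.0, 1.0]]

feature_map = conv1d_over_words(sentence, kernel)
pooled = max_over_time(feature_map)
print(feature_map, pooled)
```

Each filter contributes one pooled number per text; the numbers from all filters together form the feature vector that goes into the fully connected part.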

In my case the network input is word vectors (I used gensim's word2vec implementation). The network structure is shown in the diagram.

In brief:

- A text is represented as a matrix of shape word_count x word_vector_size. The vectors of individual words come from word2vec, which you can read about in, for example, this post [7]. Since I don't know in advance what text a user will feed in, I take the length to be 2 * N, where N is the number of vectors in the longest text of the training set. Yes, it is a finger-in-the-air guess.

- The matrix is processed by the convolutional part of the network (its output is the transformed word features)

- The extracted features are processed by the fully connected part of the network
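The first step can be sketched as follows. This is only an illustration under stated assumptions: the tiny dictionary stands in for a real word2vec model, the function name is hypothetical, and padding with zero rows and truncating long texts are guesses about how the fixed length of 2 * N might be enforced, not a description of the actual pynlc code.

```python
from typing import Dict, List

Vector = List[float]


def text_to_matrix(tokens: List[str], vectors: Dict[str, Vector],
                   vector_size: int, rows: int) -> List[Vector]:
    """Build a rows x vector_size matrix for one text.

    Tokens without a vector are skipped, overly long texts are truncated and
    short ones are padded with zero rows (both choices are assumptions).
    """
    matrix = [list(vectors[token]) for token in tokens if token in vectors]
    matrix = matrix[:rows]
    padding = [[0.0] * vector_size for _ in range(rows - len(matrix))]
    return matrix + padding


# Made-up 2-dimensional vectors standing in for a real word2vec model.
toy_vectors = {"turn": [0.1, 0.9], "on": [0.4, 0.2], "lights": [0.7, 0.3], "rain": [0.5, 0.5]}
train_tokens = [["turn", "on", "lights"], ["rain"]]

# N is the number of vectors in the longest training text; the input length is 2 * N.
longest = max(sum(token in toy_vectors for token in text) for text in train_tokens)
rows = 2 * longest

matrix = text_to_matrix(["rain", "tomorrow"], toy_vectors, vector_size=2, rows=rows)
print(len(matrix), "x", len(matrix[0]))
```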

I filter out stop words beforehand (on the reuters dataset this made no difference, but on the smaller datasets it did have an effect). More on that below.

Installing the required software (keras/theano, cuda) on Windows

Installation on linux was noticeably simpler. It required:

- python3.5

- the python header files (python-dev on debian)

- gcc

- cuda

- the same python libraries as in the list below

In my case, on win10 x64, the sequence was roughly as follows:

- Anaconda with python3.5: https://www.continuum.io/downloads [8]

- Cuda 8.0: https://developer.nvidia.com/cuda-downloads [9]. It can also run on the CPU (then gcc is enough and the next 4 steps are not needed), but on relatively large datasets the slowdown should be substantial (I haven't checked)

- The path to nvcc is added to PATH (otherwise theano will not find it)

- Visual Studio 2015 with C++, including the windows 10 kit (corecrt.h is needed)

- The path to cl.exe is added to PATH

- The path to corecrt.h is added to INCLUDE (in my case, C:\Program Files (x86)\Windows Kits\10\Include\10.0.10240.0\ucrt)

- conda install mingw libpython (gcc and libpython are needed when the network is compiled)

- and pip install keras theano python-levenshtein gensim nltk (it may well work with keras's backend switched from theano to tensorflow, but I haven't tested that)

- the following gcc flag is set in .theanorc:

    [gcc]
    cxxflags = -D_hypot=hypot

- Start python and run

    import nltk
    nltk.download()
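Since several of the steps above boil down to "make sure the tool is reachable", a small check like the following may save some time before the first theano run. It is a sketch that uses only the standard library; the function names are hypothetical, and the tool names and the corecrt.h header are simply the ones mentioned in the list.

```python
import os
import shutil


def find_build_tools(names=("nvcc", "cl", "gcc")):
    """Map each tool name to its full path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in names}


def include_has_header(header="corecrt.h"):
    """Check whether any directory listed in the INCLUDE variable contains the header."""
    directories = os.environ.get("INCLUDE", "").split(os.pathsep)
    return any(d and os.path.isfile(os.path.join(d, header)) for d in directories)


for tool, location in find_build_tools().items():
    print(tool, "->", location or "NOT FOUND on PATH")
print("corecrt.h in INCLUDE:", include_has_header())
```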

Text processing

At this stage, stop words that are not part of a "whitelist" combination (more on it below) are removed, and the remaining words are vectorized. The inputs to the algorithm are:

- the language: nltk needs it for tokenization and for returning the stop word list

- a "whitelist" of word combinations in which stop words are used. For example, "on" is classed as a stop word, but ["turn", "on"] is a different matter

- the word2vec vectors

And the algorithm itself (I see at least 2 possible improvements, but haven't managed them yet):

- Split the input text into tokens with nltk.tokenize (roughly, "Hello, world!" becomes ["hello", ",", "world", "!"])

- Drop tokens that are not in the word2vec vocabulary. More precisely, drop tokens that are not there and for which no close match by distance could be found. For now the only distance used is Levenshtein distance; one idea is to filter the tokens with the smallest Levenshtein distance by the distance from their vectors to the vectors that occur in the training set

- Select tokens:

  - that are not in the stop word list (this reduced the error on the weather dataset but, without the next step, badly hurt the result on the "car_intents" one).

  - if a token is in the stop word list, check whether the text contains a whitelist sequence that includes it (roughly, on finding "on", check for sequences from the list [["turn", "on"]]). If one is found, add the token after all. There is room for improvement: right now I check (in our example) for the presence of "turn", but it may not relate to this particular "on".

- Replace the selected tokens with their vectors.
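The token selection steps above can be sketched roughly like this. It is not the pynlc implementation: to stay self-contained it uses a regular expression instead of nltk's tokenizer, a hand-written Levenshtein distance instead of the python-levenshtein package, and a hard-coded toy vocabulary and stop word set. The function names and the max_distance threshold are assumptions made for the example.

```python
import re
from typing import List, Optional, Sequence, Set


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # deletion
                current[j - 1] + 1,                    # insertion
                previous[j - 1] + (char_a != char_b),  # substitution
            ))
        previous = current
    return previous[-1]


def closest_known(token: str, vocabulary: Sequence[str], max_distance: int) -> Optional[str]:
    """Return the nearest vocabulary word, or None if it is too far away."""
    best = min(sorted(vocabulary), key=lambda word: levenshtein(token, word), default=None)
    if best is None or levenshtein(token, best) > max_distance:
        return None
    return best


def select_tokens(text: str, vocabulary: Sequence[str], stopwords: Set[str],
                  whitelist: List[List[str]], max_distance: int = 1) -> List[str]:
    tokens = re.findall(r"\w+|[^\w\s]", text.lower())
    # Keep known tokens, or replace an unknown token with a close vocabulary word.
    known = []
    for token in tokens:
        match = token if token in vocabulary else closest_known(token, vocabulary, max_distance)
        if match is not None:
            known.append(match)
    # Drop stop words unless every word of some whitelist combination that
    # contains the stop word is present in the text (no adjacency check).
    selected = []
    for token in known:
        in_combo = any(token in combo and all(word in known for word in combo)
                       for combo in whitelist)
        if token not in stopwords or in_combo:
            selected.append(token)
    return selected


vocabulary = ["turn", "on", "the", "lights", "book", "table"]
stopwords = {"on", "the"}
whitelist = [["turn", "on"], ["turn", "off"]]

first = select_tokens("Turn on the ligts!", vocabulary, stopwords, whitelist)
second = select_tokens("The book is on the table", vocabulary, stopwords, whitelist)
print(first)
print(second)
```

In the first text "on" survives because "turn" is also present; in the second it is dropped like any other stop word. The last step of the algorithm would then look each selected token up in the word2vec model.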

Show us the code

    import itertools
    import json

    import numpy
    from gensim.models import Word2Vec
    from pynlc.test_data import reuters_classes, word2vec, car_classes, weather_classes
    from pynlc.text_classifier import TextClassifier
    from pynlc.text_processor import TextProcessor
    from sklearn.metrics import mean_squared_error


    def classification_demo(data_path, train_before, test_before, train_epochs, test_labels_path, instantiated_test_labels_path, trained_path):
        # Load the dataset and split it into training and test parts
        with open(data_path, 'r', encoding='utf-8') as data_source:
            data = json.load(data_source)
        texts = [item["text"] for item in data]
        class_names = [item["classes"] for item in data]
        train_texts = texts[:train_before]
        train_classes = class_names[:train_before]
        test_texts = texts[train_before:test_before]
        test_classes = class_names[train_before:test_before]

        # Create and train a new classifier
        text_processor = TextProcessor("english", [["turn", "on"], ["turn", "off"]], Word2Vec.load_word2vec_format(word2vec))
        classifier = TextClassifier(text_processor)
        classifier.train(train_texts, train_classes, train_epochs, True)

        # Save "real classes" / "predicted class probabilities" pairs
        prediction = classifier.predict(test_texts)
        with open(test_labels_path, "w", encoding="utf-8") as test_labels_output:
            test_labels_output_lst = []
            for i in range(0, len(prediction)):
                test_labels_output_lst.append({
                    "real": test_classes[i],
                    "classified": prediction[i]
                })
            json.dump(test_labels_output_lst, test_labels_output)

        # Rebuild the classifier from its config and predict again
        instantiated_classifier = TextClassifier(text_processor, **classifier.config)
        instantiated_prediction = instantiated_classifier.predict(test_texts)
        with open(instantiated_test_labels_path, "w", encoding="utf-8") as instantiated_test_labels_output:
            instantiated_test_labels_output_lst = []
            for i in range(0, len(instantiated_prediction)):
                instantiated_test_labels_output_lst.append({
                    "real": test_classes[i],
                    "classified": instantiated_prediction[i]
                })
            json.dump(instantiated_test_labels_output_lst, instantiated_test_labels_output)

        # Save the trained config
        with open(trained_path, "w", encoding="utf-8") as trained_output:
            json.dump(classifier.config, trained_output, ensure_ascii=True)


    def classification_error(files):
        for name in files:
            with open(name, "r", encoding="utf-8") as src:
                data = json.load(src)
            classes = []
            real = []
            for row in data:
                classes.append(row["real"])
                classified = row["classified"]
                row_classes = list(classified.keys())
                row_classes.sort()
                real.append([classified[class_name] for class_name in row_classes])
            # Turn the real class lists into 0/1 vectors in sorted class order
            labels = []
            class_names = list(set(itertools.chain(*classes)))
            class_names.sort()
            for item_classes in classes:
                labels.append([int(class_name in item_classes) for class_name in class_names])
            real_np = numpy.array(real)
            mse = mean_squared_error(numpy.array(labels), real_np)
            print(name, mse)


    if __name__ == '__main__':
        print("Reuters:\n")
        classification_demo(reuters_classes, 10000, 15000, 10,
                            "reuters_test_labels.json", "reuters_car_test_labels.json",
                            "reuters_trained.json")
        classification_error(["reuters_test_labels.json", "reuters_car_test_labels.json"])
        print("Car intents:\n")
        classification_demo(car_classes, 400, 500, 20,
                            "cars_test_labels.json", "instantiated_cars_test_labels.json",
                            "car_trained.json")
        classification_error(["cars_test_labels.json", "instantiated_cars_test_labels.json"])
        print("Weather:\n")
        classification_demo(weather_classes, 40, 50, 30,
                            "weather_test_labels.json", "instantiated_weather_test_labels.json",
                            "weather_trained.json")
        classification_error(["weather_test_labels.json", "instantiated_weather_test_labels.json"])

Here you can see:

- Data preparation

    with open(data_path, 'r', encoding='utf-8') as data_source:
        data = json.load(data_source)
    texts = [item["text"] for item in data]
    class_names = [item["classes"] for item in data]
    train_texts = texts[:train_before]
    train_classes = class_names[:train_before]
    test_texts = texts[train_before:test_before]
    test_classes = class_names[train_before:test_before]

- Creating a new classifier

    text_processor = TextProcessor("english", [["turn", "on"], ["turn", "off"]], Word2Vec.load_word2vec_format(word2vec))
    classifier = TextClassifier(text_processor)

- Training it

    classifier.train(train_texts, train_classes, train_epochs, True)

- Predicting classes for the test set and saving "real classes" / "predicted class probabilities" pairs

    prediction = classifier.predict(test_texts)
    with open(test_labels_path, "w", encoding="utf-8") as test_labels_output:
        test_labels_output_lst = []
        for i in range(0, len(prediction)):
            test_labels_output_lst.append({
                "real": test_classes[i],
                "classified": prediction[i]
            })
        json.dump(test_labels_output_lst, test_labels_output)

- Creating a new classifier instance from a config (a dict that can be serialized to and deserialized from, for example, json)

    instantiated_classifier = TextClassifier(text_processor, **classifier.config)

The output looks roughly like this:
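The number printed by classification_error is a mean squared error between 0/1 class indicators and predicted probabilities. A small worked example without numpy or sklearn may make that clearer. The rows are invented, the function names are hypothetical, and, unlike the original function, this sketch takes the class order from the predicted dictionaries.

```python
from typing import Dict, List


def multi_hot(item_classes: List[str], class_names: List[str]) -> List[int]:
    """1 where the item really has the class, 0 otherwise."""
    return [int(name in item_classes) for name in class_names]


def mse_for_rows(rows: List[Dict]) -> float:
    """Mean squared error over every (item, class) pair."""
    class_names = sorted(rows[0]["classified"])
    squared_errors = []
    for row in rows:
        expected = multi_hot(row["real"], class_names)
        predicted = [row["classified"][name] for name in class_names]
        squared_errors.extend((e - p) ** 2 for e, p in zip(expected, predicted))
    return sum(squared_errors) / len(squared_errors)


# Invented rows in the same shape as the saved *_test_labels.json files.
rows = [
    {"real": ["temperature"], "classified": {"temperature": 0.75, "conditions": 0.25}},
    {"real": ["conditions"], "classified": {"temperature": 0.0, "conditions": 1.0}},
]
mse = mse_for_rows(rows)
print(mse)  # (0.0625 + 0.0625 + 0 + 0) / 4 = 0.03125
```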

C:\Users\user\pynlc-env\lib\site-packages\gensim\utils.py:840: UserWarning: detected Windows; aliasing chunkize to chunkize_serial

warnings.warn("detected Windows; aliasing chunkize to chunkize_serial")

C:\Users\user\pynlc-env\lib\site-packages\gensim\utils.py:1015: UserWarning: Pattern library is not installed, lemmatization won't be available.

warnings.warn("Pattern library is not installed, lemmatization won't be available.")

Using Theano backend.

Using gpu device 0: GeForce GT 730 (CNMeM is disabled, cuDNN not available)

Reuters:

Train on 3000 samples, validate on 7000 samples

Epoch 1/10

20/3000 [..............................] - ETA: 307s - loss: 0.6968 - acc: 0.5376

....

3000/3000 [==============================] - 640s - loss: 0.0018 - acc: 0.9996 - val_loss: 0.0019 - val_acc: 0.9996

Epoch 8/10

20/3000 [..............................] - ETA: 323s - loss: 0.0012 - acc: 0.9994

...

3000/3000 [==============================] - 635s - loss: 0.0012 - acc: 0.9997 - val_loss: 9.2200e-04 - val_acc: 0.9998

Epoch 9/10

20/3000 [..............................] - ETA: 315s - loss: 3.4387e-05 - acc: 1.0000

...

3000/3000 [==============================] - 879s - loss: 0.0012 - acc: 0.9997 - val_loss: 0.0016 - val_acc: 0.9995

Epoch 10/10

20/3000 [..............................] - ETA: 327s - loss: 8.0144e-04 - acc: 0.9997

...

3000/3000 [==============================] - 655s - loss: 0.0012 - acc: 0.9997 - val_loss: 7.4761e-04 - val_acc: 0.9998

reuters_test_labels.json 0.000151774189194

reuters_car_test_labels.json 0.000151774189194

Car intents:

Train on 280 samples, validate on 120 samples

Epoch 1/20

20/280 [=>............................] - ETA: 0s - loss: 0.6729 - acc: 0.5250

...

280/280 [==============================] - 0s - loss: 0.2914 - acc: 0.8980 - val_loss: 0.2282 - val_acc: 0.9375

...

Epoch 19/20

20/280 [=>............................] - ETA: 0s - loss: 0.0552 - acc: 0.9857

...

280/280 [==============================] - 0s - loss: 0.0464 - acc: 0.9842 - val_loss: 0.1647 - val_acc: 0.9494

Epoch 20/20

20/280 [=>............................] - ETA: 0s - loss: 0.0636 - acc: 0.9714

...

280/280 [==============================] - 0s - loss: 0.0447 - acc: 0.9849 - val_loss: 0.1583 - val_acc: 0.9530

cars_test_labels.json 0.0520754688092

instantiated_cars_test_labels.json 0.0520754688092

Weather:

Train on 28 samples, validate on 12 samples

Epoch 1/30

20/28 [====================>.........] - ETA: 0s - loss: 0.6457 - acc: 0.6000

...

Epoch 29/30

20/28 [====================>.........] - ETA: 0s - loss: 0.0021 - acc: 1.0000

...

28/28 [==============================] - 0s - loss: 0.0019 - acc: 1.0000 - val_loss: 0.1487 - val_acc: 0.9167

Epoch 30/30

...

28/28 [==============================] - 0s - loss: 0.0018 - acc: 1.0000 - val_loss: 0.1517 - val_acc: 0.9167

weather_test_labels.json 0.0136964029149

instantiated_weather_test_labels.json 0.0136964029149

During the experiments with stop words:

- the error on the reuters dataset stayed comparable regardless of whether stop words were removed or kept

- the error on the weather dataset dropped from 8% when stop words were removed. Making the algorithm more complex had no effect (there are no combinations here in which a stop word needs to be kept).

- the error on the car_intents dataset rose to about 15% when stop words were removed (for example, a hypothetical "turn on" was cut down to "turn"). After the whitelist handling was added, it returned to its previous level

Example of running a pre-trained classifier

The TextClassifier.config property is a dictionary that can be rendered to, for example, json; after it is restored from json, its items can be passed to the TextClassifier constructor. For example:

    import json

    from gensim.models import Word2Vec
    from pynlc.test_data import word2vec
    from pynlc import TextProcessor, TextClassifier

    if __name__ == '__main__':
        text_processor = TextProcessor("english", [["turn", "on"], ["turn", "off"]],
                                       Word2Vec.load_word2vec_format(word2vec))
        with open("weather_trained.json", "r", encoding="utf-8") as classifier_data_source:
            classifier_data = json.load(classifier_data_source)
        classifier = TextClassifier(text_processor, **classifier_data)
        texts = [
            "Will it be windy or rainy at evening?",
            "How cold it'll be today?"
        ]
        predictions = classifier.predict(texts)
        for i in range(0, len(texts)):
            print(texts[i])
            print(predictions[i])

And its output:

C:\Users\user\pynlc-env\lib\site-packages\gensim\utils.py:840: UserWarning: detected Windows; aliasing chunkize to chunkize_serial

warnings.warn("detected Windows; aliasing chunkize to chunkize_serial")

C:\Users\user\pynlc-env\lib\site-packages\gensim\utils.py:1015: UserWarning: Pattern library is not installed, lemmatization won't be available.

warnings.warn("Pattern library is not installed, lemmatization won't be available.")

Using Theano backend.

Will it be windy or rainy at evening?

{'temperature': 0.039208538830280304, 'conditions': 0.9617446660995483}

How cold it'll be today?

{'temperature': 0.9986168146133423, 'conditions': 0.0016815820708870888}
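The classifier returns a dictionary of per-class probabilities, so turning it into actual labels is left to the caller. One possible way, sketched below, is to keep every class whose probability reaches a threshold. The function name and the 0.5 threshold are assumptions made for the example and are not part of pynlc; the dictionaries are copied from the output above.

```python
from typing import Dict, List


def classes_above(prediction: Dict[str, float], threshold: float = 0.5) -> List[str]:
    """Return the class names with probability >= threshold, most likely first."""
    ranked = sorted(prediction.items(), key=lambda item: item[1], reverse=True)
    return [name for name, probability in ranked if probability >= threshold]


windy = {'temperature': 0.039208538830280304, 'conditions': 0.9617446660995483}
cold = {'temperature': 0.9986168146133423, 'conditions': 0.0016815820708870888}

print(classes_above(windy))
print(classes_above(cold))
```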

And yes, the config of the network trained on the reuters dataset is here: https://drive.google.com/file/d/0B7cY3wBgM-aBWGh3NmFjSGVHVzA/view?usp=sharing [10]. A gigabyte of network for a 19 MB dataset, yes :-)

Author: alex4321

Source [11]

Source site PVSM.RU: https://www.pvsm.ru

Path to the source page: https://www.pvsm.ru/mashinnoe-obuchenie/209388

Links in the text:

[1] Code, test data and usage examples: https://bitbucket.org/alex43210/pynlc

[2] http://www.daviddlewis.com/resources/testcollections/reuters21578/

[3] https://github.com/watson-developer-cloud/car-dashboard/blob/master/training/car_workspace.json

[4] https://github.com/watson-developer-cloud/natural-language-classifier-nodejs/blob/master/training/weather_data_train.csv

[5] https://arxiv.org/abs/1408.5882

[6] https://github.com/alexander-rakhlin/CNN-for-Sentence-Classification-in-Keras

[7] this post: https://habrahabr.ru/post/253227/

[8] https://www.continuum.io/downloads

[9] https://developer.nvidia.com/cuda-downloads

[10] https://drive.google.com/file/d/0B7cY3wBgM-aBWGh3NmFjSGVHVzA/view?usp=sharing

[11] Source: https://habrahabr.ru/post/315118/?utm_source=habrahabr&utm_medium=rss&utm_campaign=best
